In a highly competitive e-commerce environment, continuously optimizing the website experience has become a key success factor. According to VWO's 2023 E-commerce Trends Report, websites implementing systematic A/B testing saw an average increase in conversion rates of 21%. A/B testing is a scientific method that lets you validate hypotheses with real users instead of making decisions based on intuition. This guide will help you establish an effective testing process and make your optimization efforts more efficient.

Identify high-value test elements

Not all tests are worth investing resources in. Methods for identifying high-value test elements include:

Analyze existing data: Use tools such as Google Analytics to identify pages with high exit rates and low conversion rates.

Heatmap analysis: Use tools such as Hotjar or Crazy Egg to observe how users interact with the page and to discover elements they overlook.

User feedback: Collect direct feedback through brief on-site surveys to understand the points of friction users encounter.

Competitor analysis: Study the design elements and features adopted by industry leaders to identify gaps and opportunities.
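
As a rough illustration of the "analyze existing data" step, the sketch below shortlists pages whose exit rate is above the site median and whose conversion rate is below it, then orders them by traffic. The `PageStats` fields, the sample numbers, and the median-based cutoff are all assumptions made for this example. They stand in for whatever your analytics export contains and are not a Google Analytics API.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class PageStats:
    """One row of a hypothetical analytics export."""
    path: str
    sessions: int
    exit_rate: float        # share of sessions that ended on this page
    conversion_rate: float  # share of sessions that converted


def shortlist_pages(pages):
    """Return pages with above-median exit rate and below-median
    conversion rate, highest-traffic pages first."""
    exit_cut = median(p.exit_rate for p in pages)
    conv_cut = median(p.conversion_rate for p in pages)
    candidates = [
        p for p in pages
        if p.exit_rate > exit_cut and p.conversion_rate < conv_cut
    ]
    return sorted(candidates, key=lambda p: p.sessions, reverse=True)


# Made-up sample data, for illustration only.
pages = [
    PageStats("/", 12000, 0.30, 0.030),
    PageStats("/category/shoes", 8000, 0.42, 0.025),
    PageStats("/product/123", 5000, 0.58, 0.012),
    PageStats("/cart", 3000, 0.61, 0.018),
    PageStats("/checkout", 2000, 0.48, 0.040),
    PageStats("/search", 6000, 0.52, 0.015),
]

test_candidates = [p.path for p in shortlist_pages(pages)]
```

Sorting by sessions is one possible tie-breaker: a weak page with a lot of traffic usually reaches the required sample size sooner than a weak page few people visit.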

Building an effective A/B testing process

Successful testing requires a rigorous methodology:

Define clear hypotheses: Each test should be based on a specific, measurable hypothesis, such as "simplifying the checkout process will increase the completion rate by 15%".

Design test variants: Create the original version (A) and at least one variant (B), changing only one variable at a time so that any difference in results can be attributed to that change.

Determine sample size: Use a sample size calculator to determine the number of visits required before the test starts. According to ConversionXL's research, at least 100-200 conversions are needed to draw reliable conclusions.

Perform a split test: Use a dedicated tool such as Optimizely or VWO to randomly allocate traffic. Google Optimize was another common choice until Google discontinued it in September 2023.

Set a testing cycle: Tests should cover at least one complete business cycle (usually 1-2 weeks) to capture various user behavior patterns.
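
To make the sample size and traffic allocation steps more concrete, here is a minimal sketch using only the Python standard library. It assumes a two-sided test on two conversion rates with the standard normal-approximation formula, a 5% significance level, and 80% power. The 3% baseline rate, the function names, and the hash-based bucketing are illustrative assumptions and do not describe how Optimizely, VWO, or any other tool works internally.

```python
import hashlib
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift,
                            alpha=0.05, power=0.80):
    """Approximate visitors needed in EACH variant to detect the given
    relative lift (normal approximation, two-sided test)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def assign_variant(user_id, experiment, variants=("A", "B")):
    """Deterministically bucket a user: the same user always sees the
    same variant of a given experiment."""
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    return variants[int(digest, 16) % len(variants)]


# Assumed inputs: 3% baseline conversion rate, 15% relative lift.
visitors_needed = sample_size_per_variant(0.03, 0.15)
variant = assign_variant("user-42", "checkout-test")
```

Smaller expected lifts require much larger samples, which is one reason to reserve tests for changes you expect to have a meaningful effect. Hashing the user ID together with the experiment name keeps assignments stable across visits while keeping different experiments independent of each other.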

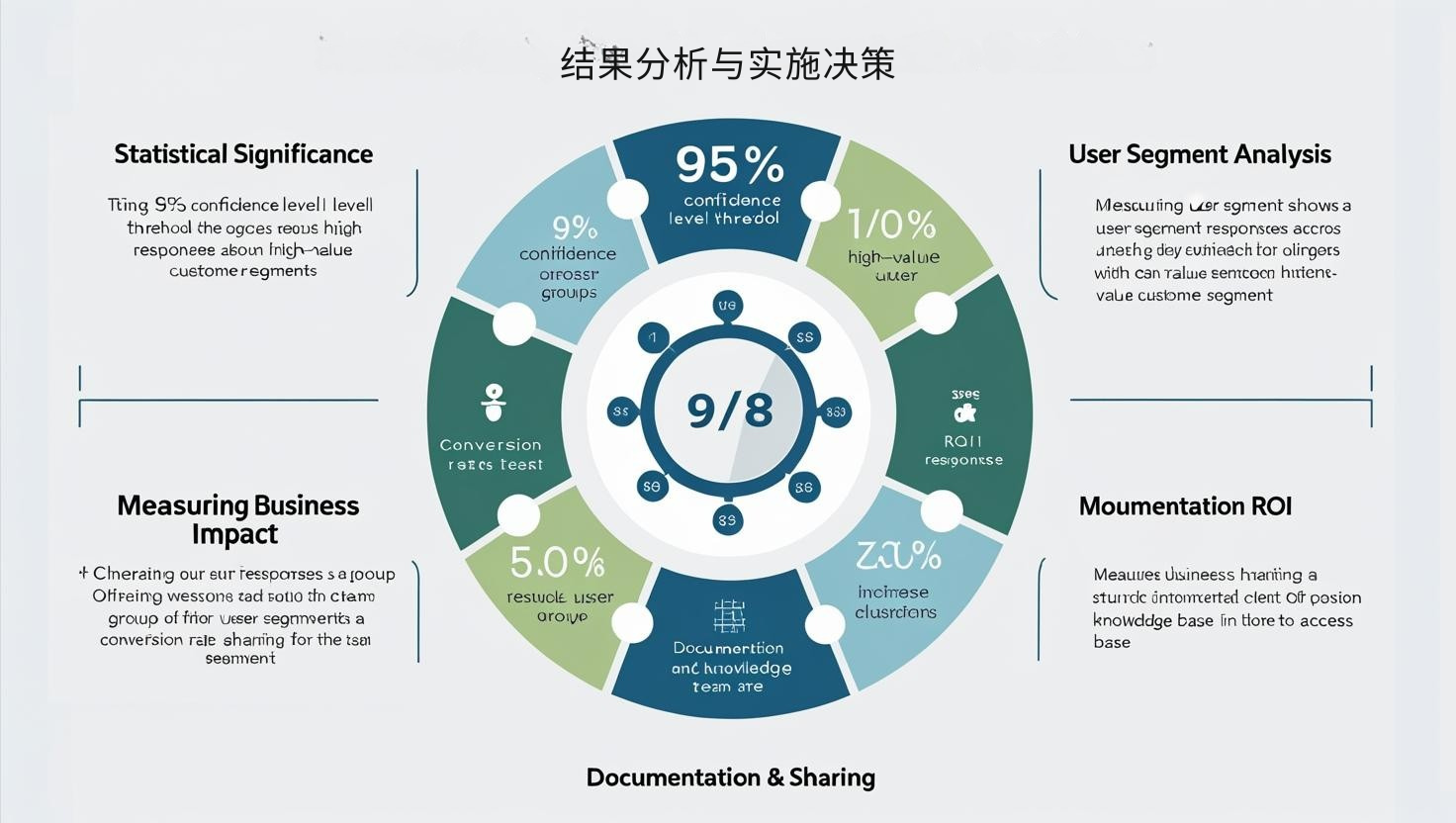

Results analysis and implementation decisions

After the test, the key is to correctly interpret the data:

Statistical significance: Implement only results that are statistically significant at a confidence level of 95% or higher, that is, with a p-value of 0.05 or lower.

Analyze user segments: Different user groups may react differently to the same change, so break results down by segment and pay particular attention to high-value customer groups.

Measure business impact: Translate conversion rate improvements into tangible revenue by calculating return on investment (ROI).

Record and share: Establish a test knowledge base that records all test details and results so the team can learn from and refer back to them.
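
The significance check and the ROI calculation above can be sketched as follows. This is one common approach, a pooled two-proportion z-test, and not necessarily the method your testing tool applies. All figures (visitor counts, conversions, average order value, cost of the change) are made-up assumptions for illustration.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between the
    conversion rates of variant A and variant B."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


def incremental_revenue(rate_a, rate_b, visitors, avg_order_value):
    """Extra revenue expected if all visitors saw variant B."""
    return (rate_b - rate_a) * visitors * avg_order_value


def roi(gain, cost):
    """Return on investment as a ratio (1.0 means 100%)."""
    return (gain - cost) / cost


# Assumed results: 300/10,000 conversions for A, 360/10,000 for B.
z, p_value = two_proportion_z_test(300, 10_000, 360, 10_000)
significant = p_value <= 0.05

# Assumed business figures: 50,000 monthly visitors, 80 average order
# value, 6,000 spent on building and running the test.
gain = incremental_revenue(0.030, 0.036, 50_000, 80)
test_roi = roi(gain, 6_000)
```

The revenue projection assumes the measured lift holds after rollout, so treat it as an estimate and compare it against actual revenue once the winning variant is live.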

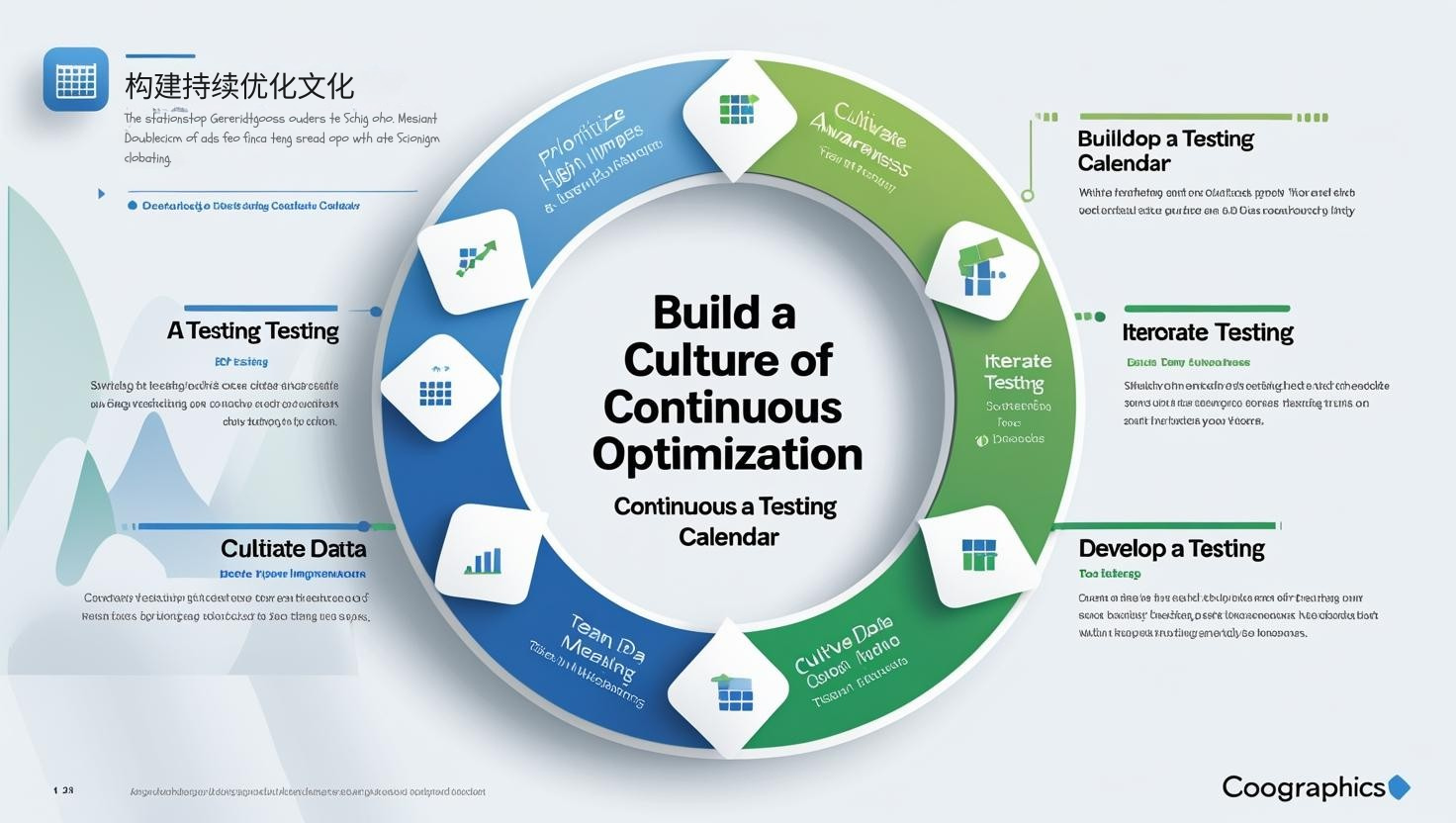

Building a culture of continuous optimization

A/B testing is not a one-time activity, but a continuous improvement process:

Develop a testing calendar: Plan long-term testing strategies, prioritizing high-impact areas.

Iterative testing: Design subsequent tests based on previous results to form an optimization loop.

Develop data awareness: Encourage teams to make decisions based on data rather than personal preferences.

Through systematic A/B testing, you can achieve continuous improvements in website performance. Even small improvements can yield significant benefits over the long term. Start testing and let the data guide your optimization journey.