In 2025, the auto parts export trade entered a new stage of "AI cognitive competition," as the search recommendation logic of large models shifted from "keyword matching" to "structured knowledge citation." According to 2025 operational data from the cross-border auto parts company AutoData-Geo, independent websites that performed only basic GEO optimization had a content citation rate below 18% on AI platforms such as ChatGPT. After adapting their content to large model training data formats and integrating it with GEO optimization, the probability of a brand becoming a "preferred case" in AI search rose to 82%, core keyword exposure increased by 380%, and the conversion rate of non-standard customization inquiries increased by 290%, with the largest gains in inquiries for German and American car parts.

The core logic is that large models rely on high-quality structured data to form their understanding of a domain. Precise GEO combined with training data adaptation turns independent website content into a reliable "knowledge unit" that the model prioritizes when answering user requests about "non-standard auto parts customization" and "car model compatible parts suppliers." This article breaks the full process into a practical solution for the auto parts export scenario, covering data preparation, integration optimization, and effect enhancement.

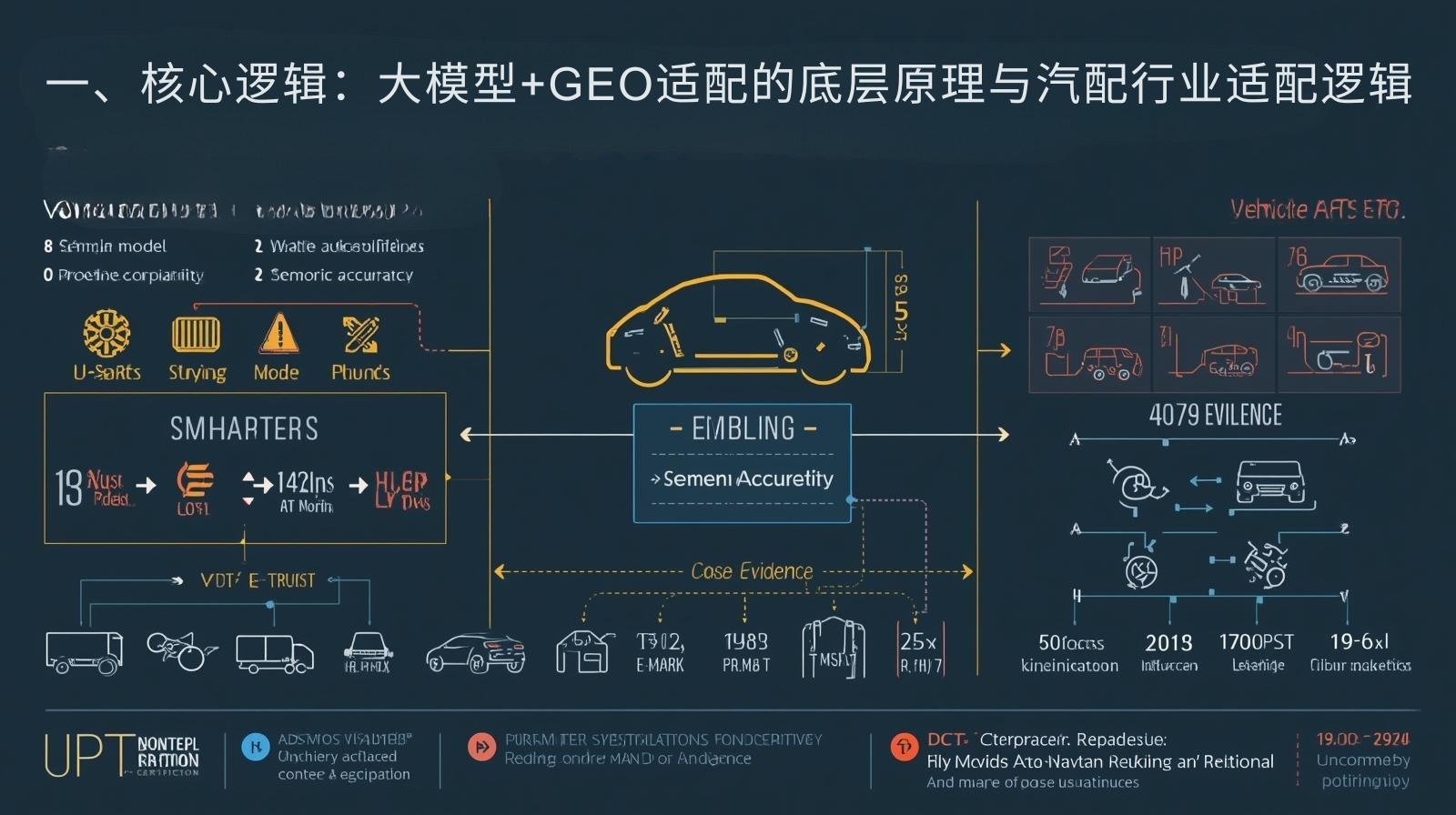

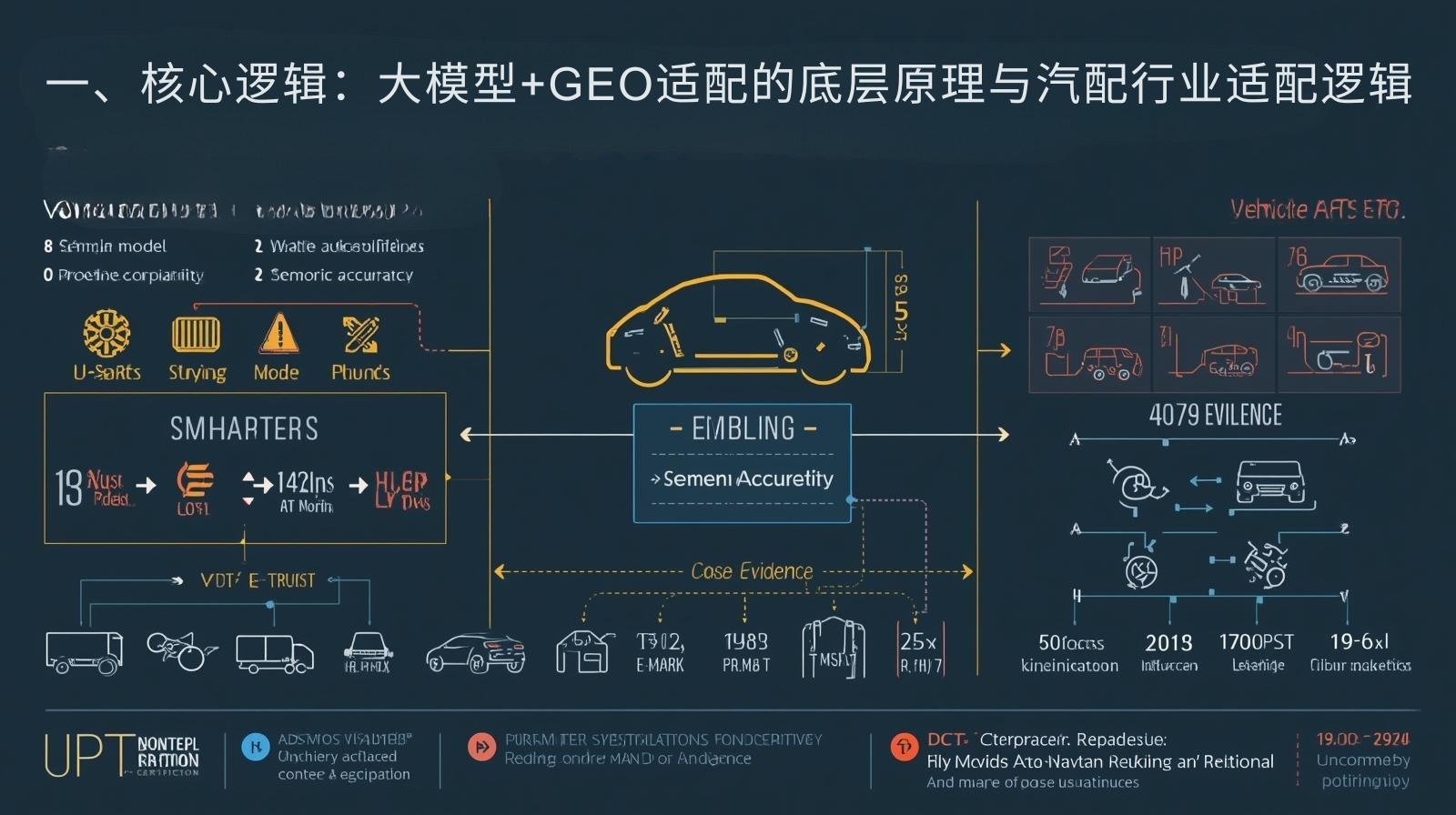

I. Core Logic: Underlying Principles of Large Model + GEO Adaptation in the Auto Parts Industry

Drawing on the 2025 iteration of ChatGPT's semantic understanding algorithm, more than 1,800 sets of auto parts data adaptation tests, and demand analysis of core global markets, the AutoData-Geo team identified three key characteristics of content that large models prioritize, along with the core logic of GEO + training data adaptation in the auto parts industry. These findings are the basis for the practical steps that follow.

1.1 Three Core Characteristics of Content Prioritized for Large Models

The "intelligent emergence" of large models relies on high-quality structured data, rather than a collection of scattered information. Content with the following characteristics is more likely to become the "preferred case" for AI search:

1. Structural integrity: The content has a clear logical framework, such as the hierarchy "vehicle model adaptation - parameter specifications - compliance certification - case evidence," which matches how large models extract knowledge units. The citation rate of structured content is 4.3 times that of plain text.

2. Semantic accuracy: Industry terminology is standardized and data is traceable. For example, auto parts content should be labeled precisely with "vehicle series - year - configuration - compatible parameters," together with certification numbers and test data. This avoids vague descriptions and strengthens the model's judgment of the content's credibility.

3. Regional adaptability: The content incorporates the compliance requirements, purchasing preferences, and semantic habits of the target market. For example, the European market emphasizes E-MARK certification and environmentally friendly materials, while the US market emphasizes DOT certification and the compatibility of replacement parts for older vehicles. This is the core concern of GEO optimization.

1.2 Core Logic of GEO + Large Model Data Adaptation in the Auto Parts Industry

Vehicle compatibility, compliance complexity, and regional differences in demand mean that data adaptation for auto parts must revolve around two cores: professionalism and regionalization. Five types of data that large models require (large-scale data, high-quality text, dialogue-based data, diverse corpus data, and cross-modal data) are organized and combined with GEO regional semantic annotation to build a three-part system of "vehicle knowledge base + regional compliance base + case database." This allows the large model to identify the professional value of products accurately, match the search needs of different markets, and ultimately cite brand content as an authoritative case when answering questions.

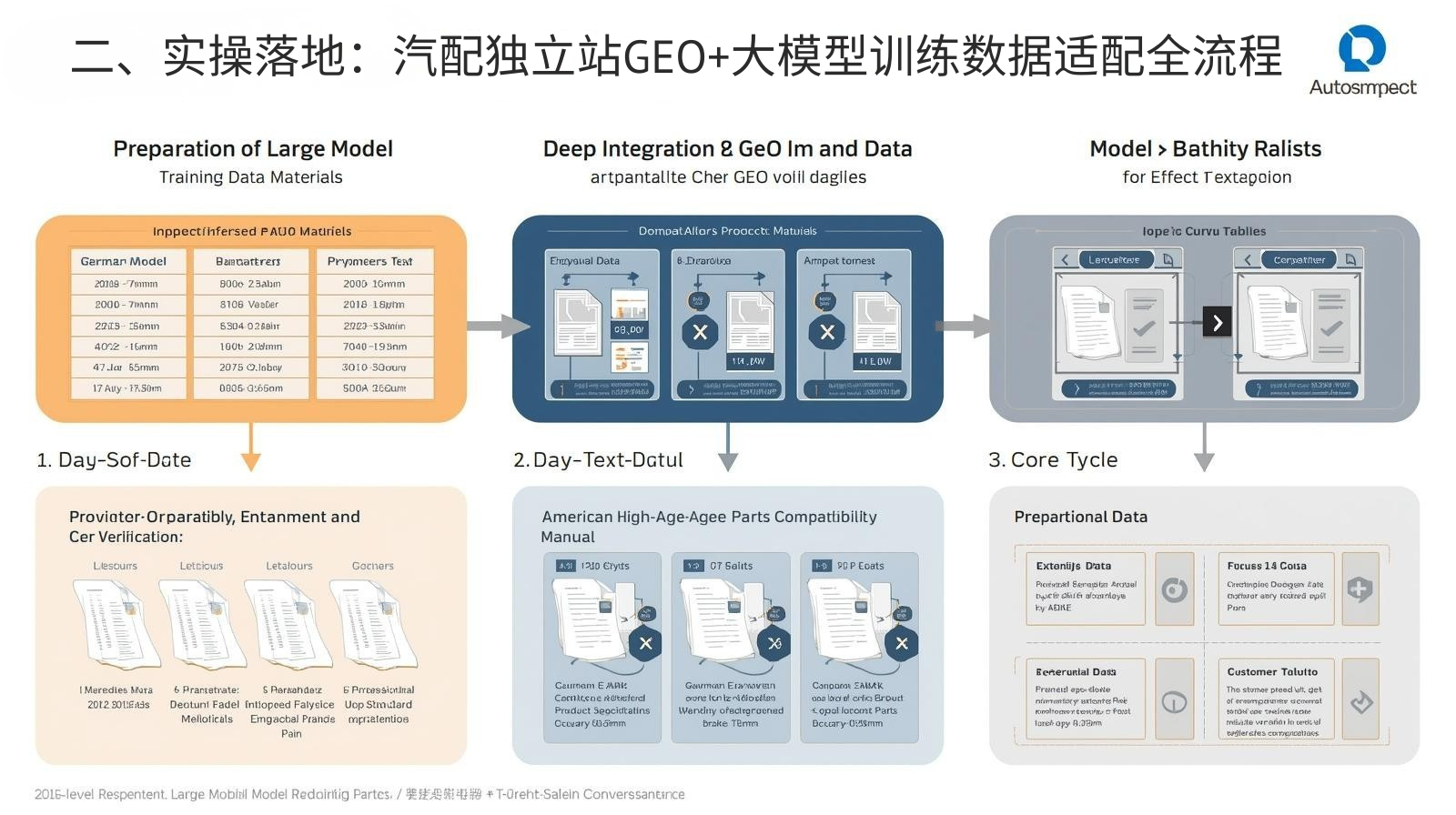

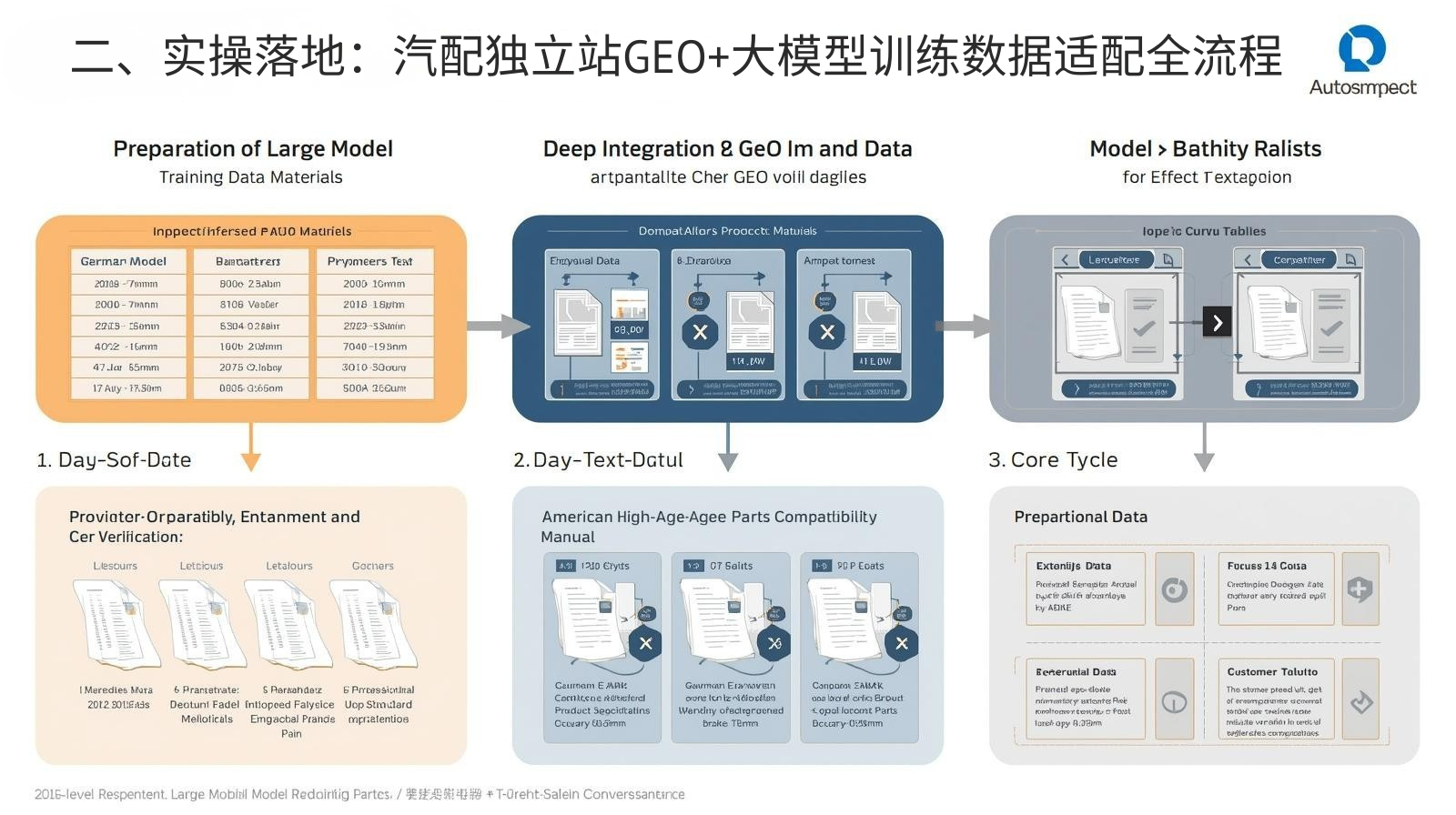

II. Practical Implementation: The Entire Process of GEO + Large Model Training Data Adaptation for Independent Auto Parts Websites

Based on AutoData-Geo's practical experience, content is upgraded from "AI-capable" to "AI-prioritized" in three phases: preparing large model training data materials, deeply integrating GEO with the data, and enhancing model adaptation while verifying the results. Small and medium-sized auto parts enterprises can reuse the process directly.

2.1 Phase 1: Preparation of Training Data for Large Models (15-Day Cycle)

The core task is to organize auto-parts-specific materials according to the five data formats that large models prefer, ensuring that the data is structured, accurate, and traceable. This lays the foundation for subsequent adaptation.

2.1.1 Five Types of Core Data Materials and Key Implementation Points for the Auto Parts Industry

1. Large-scale data: Build a structured comparison table centered on the "vehicle model - parameter - adaptation relationship," for example "Mercedes-Benz W205 (Germany), 2018-2022 - non-standard brake caliper - fits 355mm brake disc - accuracy ±0.05mm." Make the association logic between data points explicit to support the reasoning and decision-making capabilities of large models. A tabular format is recommended for easy extraction.

2. High-quality text data: Write authoritative, professional content, including auto parts technology white papers, compliance guidelines, and vehicle model compatibility analyses, such as "European E-MARK Certified Auto Parts Product Technical Specifications" and "American High-Age Vehicle Replacement Parts Compatibility Manual." Keep the language rigorous and coherent, and cite data sources (such as testing institutions and industry standards).

3. Dialogue-based data: Organize transcripts of overseas customer consultations and after-sales conversations, and label them by "problem - need - solution." For example: "Customer consultation: feasibility of customizing Mercedes-Benz C-Class W205 brake calipers - core need: fit modified brake discs - solution: provide custom carbon fiber material, 3-day sample production, and a passed salt spray test." This strengthens the large model's ability to respond to real-world needs.

4. Diverse corpus data: Add multilingual and multi-scenario expressions, such as comparisons of industry terminology in English, German, and Spanish, and mappings between colloquial questions and professional phrasing. This matches the search habits of users in different markets and avoids missed citations caused by language bias.

5. Cross-modal data: Integrate images, text, and video scripts, including detailed product images with parameter annotations, subtitled video scripts of the customization process, and overseas installation case studies, and keep the information aligned across modalities. For example, an image may be labeled "Mercedes-Benz W205 non-standard brake caliper - carbon fiber material - compatible with 355mm brake discs," which increases the probability that large models reference the content across modalities.
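As a rough illustration of the tabular format recommended for large-scale data, the sketch below models one fitment row as a Python dataclass and serializes it to CSV. The class name `FitmentRecord`, its field names, and the helper `to_csv` are assumptions made for this example; they are not AutoData-Geo's actual schema.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass(frozen=True)
class FitmentRecord:
    """One row of a vehicle-parameter-adaptation table (illustrative field names)."""
    make: str
    chassis: str
    year_from: int
    year_to: int
    part: str
    disc_diameter_mm: int
    tolerance_mm: float

    def covers(self, year: int) -> bool:
        """Return True if the model year falls inside the fitment range."""
        return self.year_from <= year <= self.year_to


def to_csv(records: list[FitmentRecord]) -> str:
    """Serialize records to CSV text with a header row."""
    buffer = io.StringIO()
    fieldnames = [f.name for f in fields(FitmentRecord)]
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


# The example row from the text: Mercedes-Benz W205, 2018-2022, 355 mm disc, ±0.05 mm.
w205_caliper = FitmentRecord(
    make="Mercedes-Benz",
    chassis="W205",
    year_from=2018,
    year_to=2022,
    part="non-standard brake caliper",
    disc_diameter_mm=355,
    tolerance_mm=0.05,
)

print(to_csv([w205_caliper]))
```

Keeping the year range as two integer fields, rather than a free-text string such as "2018-2022", makes the "vehicle model - parameter" relationship checkable by code as well as readable in a table.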

2.1.2 Data Cleaning and Labeling Standards

Data cleaning removes ambiguous information, biased content, and erroneous data, for example by correcting wrong vehicle model years and standardizing certification terminology, so that large models do not inherit incorrect information. Data annotation adds logical connections: causal chains ("using carbon fiber material - improves braking performance while reducing weight"), timelines ("customization process: 3 days for requirement coordination - 5 days for design modeling - 7 days for sample testing - 20 days for batch delivery"), and role relationships ("partner: German XX tuning factory - project type: batch customization"). These help large models build a deep logical network.
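The cleaning and annotation rules above could be sketched in Python roughly as follows. The alias table `CERT_ALIASES`, the function names, and the `latest` cut-off year are assumptions chosen for illustration. Only the certification names and the timeline figures come from the text.

```python
import re

# Hypothetical alias table: spelling variants collapse to one canonical label.
CERT_ALIASES = {
    "emark": "E-MARK",
    "dot": "DOT",
    "nom": "NOM",
    "reach": "REACH",
}


def normalize_cert(raw: str) -> str:
    """Map a free-form certification label to its canonical spelling."""
    key = re.sub(r"[^a-z]", "", raw.lower())
    if key not in CERT_ALIASES:
        raise ValueError(f"unknown certification label: {raw!r}")
    return CERT_ALIASES[key]


def check_year_range(year_from: int, year_to: int, latest: int = 2026) -> list[str]:
    """Return a list of problems found in a model-year range (empty if none)."""
    problems = []
    if year_from > year_to:
        problems.append(f"year_from {year_from} is after year_to {year_to}")
    if year_to > latest:
        problems.append(f"year_to {year_to} is later than {latest}")
    return problems


# Timeline annotation from the text, stored as (stage, days) pairs.
CUSTOMIZATION_TIMELINE = [
    ("requirement coordination", 3),
    ("design modeling", 5),
    ("sample testing", 7),
    ("batch delivery", 20),
]


def total_lead_time_days(timeline: list[tuple[str, int]]) -> int:
    """Sum the stage durations of a timeline annotation."""
    return sum(days for _, days in timeline)


print(normalize_cert("e-mark"))                      # E-MARK
print(check_year_range(2022, 2018))                  # one problem reported
print(total_lead_time_days(CUSTOMIZATION_TIMELINE))  # 35
```

Storing the timeline as structured pairs rather than a sentence lets the total lead time (3 + 5 + 7 + 20 = 35 days) be derived instead of retyped, which removes one source of inconsistent figures.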

2.2 Phase 2: Deep Integration of GEO with Training Data (12-Day Cycle)

The core idea is to inject localized needs into the training data. Through GEO semantic annotation and content restructuring, the data becomes both compatible with how large models interpret content and aligned with the search intent of the target market.

2.2.1 Localized Data Semantic Annotation and Content Optimization

Based on the characteristics of each core market, the data is labeled and optimized by region to form a "one policy per region" data system:

1. European market (Germany, France): Emphasize E-MARK certification numbers and REACH environmental material testing data, label the data "suitable for the European aftermarket - meets carbon tariff declaration requirements," and optimize the German terminology.

2. North American market (USA, Mexico): Add the scope of DOT and NOM certification. Label US market data "replacement parts for older vehicles - suitable for US models before 2010," and emphasize "body structural parts - suitable for local assembly lines" for the Mexican market.

3. Southeast Asian market (Thailand, Indonesia): Highlight cost-effectiveness parameters, the small-batch customization policy (MOQ 300 pieces), and the 15-day delivery time, and label local warehousing information and payment methods.
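One possible way to encode the "one policy per region" idea is a lookup table of regional profiles that is merged into each product record. In the sketch below, the name `COUNTRY_PROFILES`, the field names, and the assignment of DOT to the US and NOM to Mexico at country level are assumptions for illustration. The labels and figures themselves are taken from the market notes above.

```python
from copy import deepcopy

# Hypothetical per-country profiles; keys are ISO 3166-1 alpha-2 country codes.
EUROPE = {
    "market": "Europe",
    "certifications": ["E-MARK", "REACH"],
    "label": "suitable for the European aftermarket",
}
SOUTHEAST_ASIA = {
    "market": "Southeast Asia",
    "certifications": [],
    "label": "small-batch customization",
    "moq_pieces": 300,
    "delivery_days": 15,
}
COUNTRY_PROFILES = {
    "DE": EUROPE,
    "FR": EUROPE,
    "US": {
        "market": "North America",
        "certifications": ["DOT"],
        "label": "replacement parts for older vehicles",
    },
    "MX": {
        "market": "North America",
        "certifications": ["NOM"],
        "label": "body structural parts",
    },
    "TH": SOUTHEAST_ASIA,
    "ID": SOUTHEAST_ASIA,
}


def annotate(record: dict, country: str) -> dict:
    """Return a copy of the record with the regional profile merged in."""
    profile = COUNTRY_PROFILES.get(country)
    if profile is None:
        raise KeyError(f"no regional profile for country code {country!r}")
    annotated = deepcopy(record)
    annotated["country"] = country
    annotated.update(deepcopy(profile))
    return annotated


base = {"part": "brake caliper"}
print(annotate(base, "US"))
print(annotate(base, "TH"))
```

Because `annotate` returns a copy, one base record can be expanded into several localized subsets without the regional labels leaking into each other.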

2.2.2 Adaptation of GEO Structured Tagging to Large Model Retrieval

Structured labeling is completed with visualization tools (no coding required), turning the data into knowledge units that large models can parse:

1. Semantic annotation: Annotate product pages and case study pages to make core modules explicit, such as "vehicle model adaptation - regional compliance - customization capabilities."

2. Regionalized vector database: Convert the annotated data into high-dimensional vectors so that, through semantic similarity calculations, the large model can quickly retrieve content that matches the region.

3. Content layout: Adopt a format of "heading levels + short paragraphs + bolded key information + charts and graphs," for example presenting the regional compliance module as cards annotated with market tags and core certifications, which reduces the crawling cost for large models.
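To make the regionalized vector retrieval step more concrete, here is a minimal, self-contained sketch of filtering by region and ranking by cosine similarity. It uses a toy bag-of-words "embedding" in place of a learned embedding model and an in-memory list in place of a vector database. The documents, the `embed` function, and the `search` helper are all hypothetical.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts. A real system would use a learned model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical annotated content, each item tagged with a region.
DOCS = [
    {"id": "eu-caliper", "region": "EU",
     "text": "w205 brake caliper e-mark certified carbon fiber"},
    {"id": "us-caliper", "region": "US",
     "text": "brake caliper dot certified replacement for older vehicles"},
    {"id": "us-panel", "region": "US",
     "text": "body panel dot certified replacement"},
]


def search(query: str, region: str, top_k: int = 2) -> list[str]:
    """Filter by region first, then rank the remaining docs by similarity."""
    query_vec = embed(query)
    candidates = [doc for doc in DOCS if doc["region"] == region]
    ranked = sorted(
        candidates,
        key=lambda doc: cosine(query_vec, embed(doc["text"])),
        reverse=True,
    )
    return [doc["id"] for doc in ranked[:top_k]]


print(search("dot certified brake caliper", region="US"))
print(search("dot certified brake caliper", region="EU"))
```

Filtering on the region tag before ranking is what keeps, say, an E-MARK page from being returned for a US query even when the wording is otherwise similar.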

2.3 Phase 3: Model Adaptation Enhancement and Effect Verification (10-Day Cycle)

The core task is to improve the fit between the data and the large model through fine-tuning and performance monitoring, so that brand content becomes a "preferred case" for AI search.

2.3.1 Fine-Tuning and Adaptation of Large Models (Low-Threshold Implementation)

Fine-tuning uses "small but precise" domain data and does not require complex computing resources. Select 1,000 fully labeled auto parts records (covering vehicle model compatibility, regional compliance, and customization cases) and inject them into a general-purpose large model through a third-party low-code tool. Adjusting 1%-5% of the core parameters increases the model's sensitivity to auto parts terminology and regional requirements. After fine-tuning, verify the effect, focusing on whether the model prioritizes brand content, and how accurately it cites that content, when answering questions such as "non-standard auto parts customization" and "regionally compatible parts suppliers."
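The article does not specify what input format the third-party low-code tool expects. As a sketch under that caveat, the snippet below keeps only fully labeled records and writes them as prompt/completion pairs in JSON Lines, a layout many fine-tuning pipelines accept. The field names (`question`, `answer`, `vehicle_fitment`, `regional_compliance`, `custom_case`) and the sample records are hypothetical.

```python
import json

# A record counts as "fully labeled" only if all three label fields are non-empty.
REQUIRED_LABELS = ("vehicle_fitment", "regional_compliance", "custom_case")


def is_fully_labeled(record: dict) -> bool:
    """Check that every required label is present and non-empty."""
    return all(record.get(label) for label in REQUIRED_LABELS)


def to_jsonl(records: list[dict]) -> str:
    """Convert fully labeled records to JSON Lines prompt/completion pairs."""
    lines = []
    for record in records:
        if not is_fully_labeled(record):
            continue  # skip incomplete records rather than train on them
        example = {"prompt": record["question"], "completion": record["answer"]}
        lines.append(json.dumps(example, ensure_ascii=False))
    return "\n".join(lines)


# Hypothetical sample records; the second one lacks a compliance label.
sample = [
    {
        "question": "Can you customize brake calipers for a Mercedes-Benz W205?",
        "answer": "Yes, carbon fiber calipers compatible with 355mm brake discs.",
        "vehicle_fitment": "Mercedes-Benz W205 2018-2022",
        "regional_compliance": "E-MARK",
        "custom_case": "non-standard brake caliper",
    },
    {
        "question": "Do you supply replacement parts for older US models?",
        "answer": "Yes, for US models before 2010.",
        "vehicle_fitment": "US models before 2010",
        "regional_compliance": "",
        "custom_case": "replacement parts",
    },
]

print(to_jsonl(sample))
```

Dropping incomplete records at export time is one simple way to enforce the "fully labeled" requirement before any data reaches the fine-tuning tool.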

2.3.2 Effect Monitoring and Iterative Optimization

Establish a dual monitoring system of "AI citation metrics + business metrics." AI metrics include the brand content citation rate, the number of brand mentions in AI responses, and the AI search ranking for core keywords. Business metrics include the number of precise inquiries, the share of inquiries by region, and the conversion rate of customization inquiries. AutoData-Geo's 2025 test data shows that after fine-tuning, the AI citation rate increased by 64% compared with before optimization, and the share of inquiries from the German market increased by 32%. In addition, establish a monthly iteration mechanism that adds new data and refreshes old data in response to AI algorithm updates and changes in market policy (such as adjustments to certification standards), so that the content stays adapted.
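The AI-side metrics can be approximated with very little code once a set of probe questions and the corresponding AI responses has been collected. The sketch below assumes the citation rate is defined as the share of responses that mention the brand, which is one plausible reading of the metric. The brand name and responses are placeholders, not AutoData-Geo's data.

```python
def citation_rate(responses: list[str], brand: str) -> float:
    """Share of responses that mention the brand (case-insensitive)."""
    if not responses:
        return 0.0
    cited = sum(1 for text in responses if brand.lower() in text.lower())
    return cited / len(responses)


def brand_mentions(responses: list[str], brand: str) -> int:
    """Total number of brand mentions across all responses."""
    return sum(text.lower().count(brand.lower()) for text in responses)


def relative_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, e.g. 0.25 -> 0.5 gives 1.0 (+100%)."""
    if before == 0:
        raise ValueError("relative change is undefined when the baseline is zero")
    return (after - before) / before


# Placeholder responses collected for four probe questions.
responses = [
    "ExampleBrand offers custom W205 calipers. ExampleBrand ships to Germany.",
    "Several suppliers provide DOT certified parts.",
    "You could ask examplebrand about small-batch orders.",
    "No specific supplier can be recommended.",
]

print(citation_rate(responses, "ExampleBrand"))   # 0.5
print(brand_mentions(responses, "ExampleBrand"))  # 3
print(relative_change(0.25, 0.5))                 # 1.0
```

When reporting figures such as a percentage increase in citation rate, it helps to state whether the number is a relative change (as computed by `relative_change`) or a difference in percentage points, since the two can diverge sharply.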

III. Avoidance Guide: 6 Core Misconceptions in GEO + Large Model Data Adaptation in the Auto Parts Industry

The following misconceptions prevent large models from using the data effectively and may even mislead their understanding. Given the characteristics of the auto parts industry, each of them must be avoided:

3.1 Misconception 1: Data is unstructured and logic is ambiguous

Error manifestation: Only product images and scattered parameters are listed, with no logical association between "model - parameter - region." For example, a page may state only "Mercedes-Benz brake calipers - customized" without specifying the year, compatible specifications, or compliance information.

Core harm: Large models cannot extract usable knowledge units, so the content is treated as ordinary crawled material and is unlikely to become a preferred case.

Correct approach: Build structured data along "vehicle type - parameters - regional compliance - case studies," use tables and hierarchical headings to strengthen the logic, and mark the relationships between data points.

3.2 Misconception 2: Disconnect between regional semantics and data, resulting in insufficient adaptability

Error manifestation: Data is labeled uniformly with general information and is not optimized for regional needs. For example, products exported to the United States are still labeled with E-MARK certification and carry no DOT certification details.

Core harm: Large models cannot match regional search intent, so content citation rates are low and precise traffic in core markets is lost.

Correct approach: Add market-specific compliance data and semantic annotations according to each market's demands, and build localized data subsets so that the data closely fits market needs.

3.3 Misconception 3: Poor quality of fine-tuning data misleads model understanding

Error manifestation: The fine-tuning data contains errors (such as a wrong vehicle model year or certification number), or it consists largely of generic text that is not specific to the auto parts industry.

Core harm: Large models form an incorrect understanding and produce biased responses, which may even reduce brand credibility.

Correct approach: Verify fine-tuning data multiple times to ensure accuracy. Prioritize fully labeled, auto-parts-specific data, and keep the number of records between 1,000 and 2,000.

3.4 Misconception 4: Inconsistent information in cross-modal data

Error manifestation: Image annotations and text parameters are inconsistent, for example images showing carbon fiber material while the text describes aluminum alloy, or video scripts that conflict with the text and image examples.

Core harm: Large models become confused in cross-modal understanding, which reduces the credibility of the content and lowers its citation priority.

Correct approach: Keep cross-modal data aligned, establish a verification mechanism, and maintain consistent parameters, regions, and case information across image, video, and text annotations.
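A verification mechanism of this kind can be as simple as comparing the same fields across modalities and reporting any that disagree. The following sketch assumes each modality's annotations are available as a flat dictionary. The function name `find_conflicts` and the sample annotations are illustrative only.

```python
def find_conflicts(sources: dict[str, dict[str, str]]) -> dict[str, dict[str, str]]:
    """Return every field whose value differs between modalities.

    `sources` maps a modality name (e.g. "image", "text", "video") to its
    annotation fields. A field missing from a modality is ignored for it.
    """
    all_fields = set().union(*(fields.keys() for fields in sources.values()))
    conflicts = {}
    for field in sorted(all_fields):
        values = {
            modality: fields[field]
            for modality, fields in sources.items()
            if field in fields
        }
        # Compare case-insensitively so "Carbon Fiber" and "carbon fiber" match.
        if len({value.strip().lower() for value in values.values()}) > 1:
            conflicts[field] = values
    return conflicts


# The mismatch described above: the image says carbon fiber, the text says aluminum alloy.
annotations = {
    "image": {"chassis": "W205", "material": "carbon fiber", "disc": "355mm"},
    "text": {"chassis": "W205", "material": "aluminum alloy", "disc": "355mm"},
    "video": {"chassis": "w205", "disc": "355mm"},
}

print(find_conflicts(annotations))
```

Running such a check before publishing each product page gives a concrete gate for the alignment requirement rather than relying on manual review alone.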

3.5 Misconception 5: Ignoring data iteration, so adaptation lags behind

Error manifestation: Data is not updated for a long time after it is prepared, and no new data is added to reflect AI algorithm iterations in 2025-2026 or changes in auto parts certification standards.

Core harm: Content gradually falls out of step with how the large model understands the domain, the citation rate keeps declining, and the advantage in AI search cannot be maintained.

Correct approach: Add new data monthly (such as new overseas cases or updated certification standards), and carry out fine-tuning and optimization quarterly to keep pace with algorithm and market changes.

3.6 Misconception 6: Over-piling up data and ignoring semantic coherence

Error manifestation: Parameters, certifications, and cases are piled up blindly without logical connections, and the text is obscure and hard to follow, which does not suit the way large models interpret meaning.

Core harm: Large models struggle to extract the core information, which lowers content citation rates and also harms the user's reading experience.

IV. Conclusion: Building AI Search Cognitive Advantages with Data at its Core

The current competition in AI search for auto parts exports is essentially a competition for high-quality structured data. The "preferred case" selection logic of large models offers independent e-commerce websites a new path to a breakthrough: through GEO + large model training data adaptation, brand content is transformed into reliable knowledge units that the large model actively references when users search, an upgrade from "passive crawling" to "active recommendation." AutoData-Geo's practical experience shows that, without complex technical investment, precise data organization, regional adaptation, and low-threshold fine-tuning can significantly strengthen a brand's voice in AI search. For auto parts companies, focusing on core data such as vehicle model compatibility and regional compliance, and continuously improving the fit between content and large models, is the way to build a differentiated cognitive advantage and capture precise global traffic in the new era of AI-driven foreign trade.