In a fiercely competitive e-commerce market, independent online stores must optimize continuously. According to Shopify's research data, e-commerce websites that run A/B tests can increase conversion rates by an average of 23%. A/B testing is a scientific optimization method: it lets you verify hypotheses against real traffic instead of making subjective decisions based on personal preference. This article shares practical A/B testing experience to help you improve website performance systematically.

How to identify high-value test items

Not every test is worth the time and resources. Consider the following factors when choosing a high-value test:

- Data-driven problem identification: Analyze your website data to find bottlenecks in the conversion funnel. For example, a product page with high traffic but a low conversion rate is a strong candidate for priority testing.
- Potential impact assessment: Prioritize elements on the core conversion path, following the principle of "test the areas that can bring the greatest improvement first".
- Implementation difficulty: Balance potential benefit against implementation cost. Start with tests that are easy to implement but may produce significant improvements.
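
The selection factors above can be sketched in a few lines of Python. This is only an illustration: the funnel counts, the `Candidate` ideas, and the 1-10 `impact` and `ease` scores are hypothetical, and multiplying impact by ease is one simple scoring choice among many.

```python
from dataclasses import dataclass

# Hypothetical funnel counts (visitors reaching each step); not real data.
funnel = [
    ("home", 10000),
    ("product_page", 6000),
    ("cart", 900),
    ("checkout", 600),
    ("purchase", 450),
]


def step_conversion_rates(steps):
    """Return (from_step, to_step, rate) for each adjacent pair of funnel steps."""
    return [
        (a_name, b_name, b_count / a_count)
        for (a_name, a_count), (b_name, b_count) in zip(steps, steps[1:])
    ]


def biggest_bottleneck(steps):
    """Return the transition with the lowest step-to-step conversion rate."""
    return min(step_conversion_rates(steps), key=lambda t: t[2])


@dataclass
class Candidate:
    name: str
    impact: int  # 1-10, estimated effect on the core conversion path (assumed scale)
    ease: int    # 1-10, higher means cheaper to implement (assumed scale)


def prioritize(candidates):
    """Sort candidates by impact * ease, highest score first."""
    return sorted(candidates, key=lambda c: c.impact * c.ease, reverse=True)


# Hypothetical test ideas, scored by hand.
ideas = [
    Candidate("Redesign checkout flow", impact=9, ease=2),
    Candidate("Rewrite product page CTA", impact=7, ease=8),
    Candidate("Change footer links", impact=2, ease=9),
]

if __name__ == "__main__":
    src, dst, rate = biggest_bottleneck(funnel)
    print(f"Largest drop-off: {src} -> {dst} ({rate:.1%} continue)")
    for c in prioritize(ideas):
        print(f"{c.impact * c.ease:>3}  {c.name}")
```

With these made-up numbers, the weakest transition is from the product page to the cart, and the easy, high-impact product page test ranks first.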

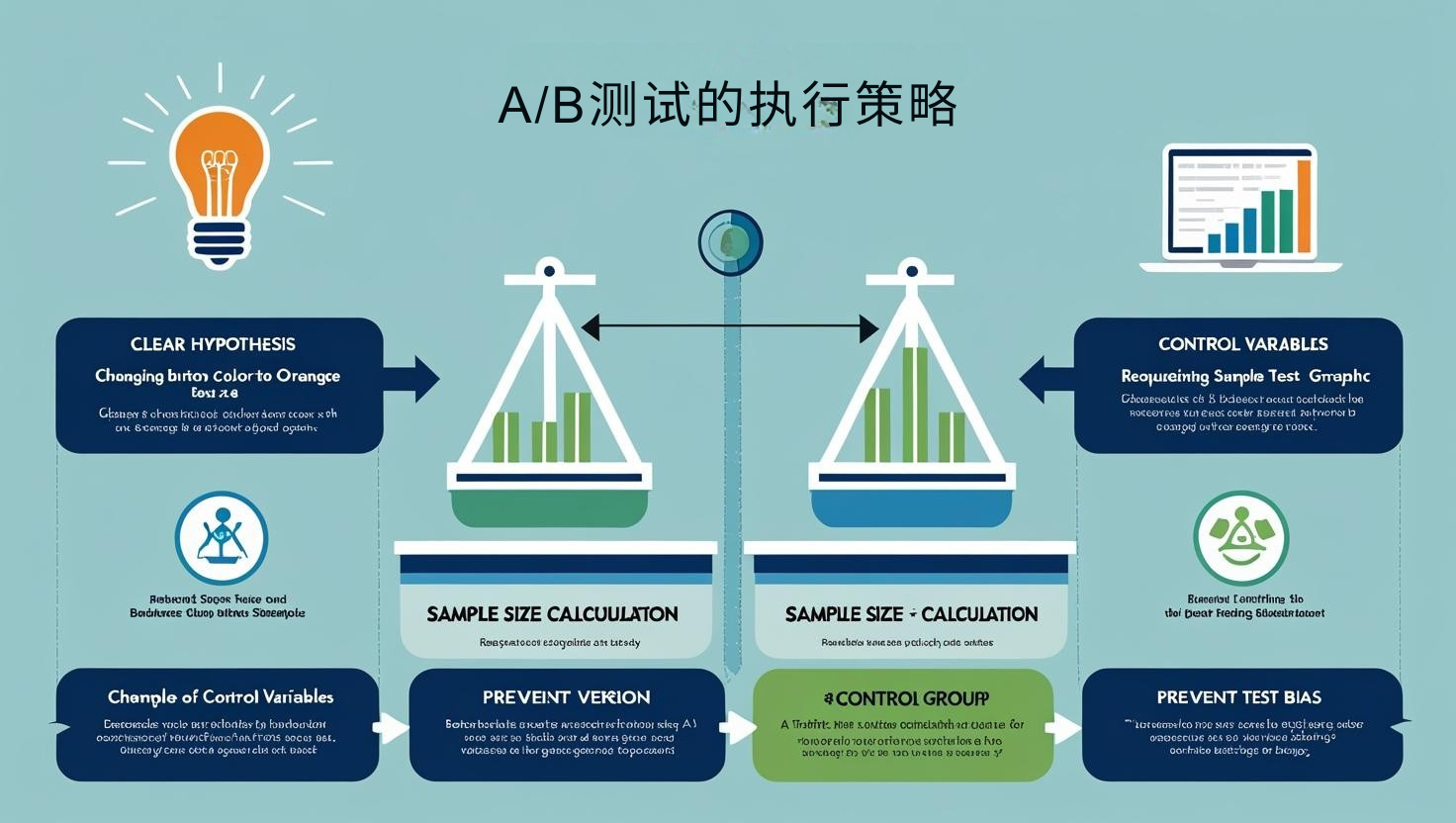

Execution strategy for A/B tests

Effective A/B testing requires a strict execution process:

- Clear hypotheses: Each test should be based on a clear hypothesis, such as "changing the button color to orange will increase the click-through rate".
- Control variables: Test only one variable at a time. Otherwise you cannot tell which change caused the difference in results.
- Sample size calculation: Use a sample size calculator to determine, based on your website traffic, how long the test must run to reach statistical significance. CXL studies show that about 60% of A/B tests draw false conclusions due to insufficient sample size.
- Prevent test bias: Ensure that traffic is allocated randomly between the test and control groups, and avoid bias from time of day or device type.
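
To show roughly what a sample size calculator does, here is a minimal sketch using the standard normal-approximation formula for comparing two conversion rates. The function names, the 5% significance level, and the 80% power default are assumptions for illustration, and a real calculator may use a slightly different formula.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, min_relative_lift, alpha=0.05, power=0.8):
    """Approximate visitors needed per variant for a two-sided two-proportion test.

    baseline_rate: current conversion rate, e.g. 0.03 for 3%
    min_relative_lift: smallest relative improvement worth detecting, e.g. 0.20
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + min_relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


def days_needed(n_per_variant, daily_visitors, variants=2):
    """Days required if daily traffic is split evenly across all variants."""
    return math.ceil(n_per_variant * variants / daily_visitors)


if __name__ == "__main__":
    # Hypothetical inputs: 3% baseline, 20% relative lift, 2,000 visitors per day.
    n = sample_size_per_variant(0.03, 0.20)
    print(f"Visitors per variant: {n}")
    print(f"Estimated duration: {days_needed(n, 2000)} days")
```

Note how the required sample grows quickly as the lift you want to detect shrinks, which is why low-traffic sites often need to test bolder changes.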

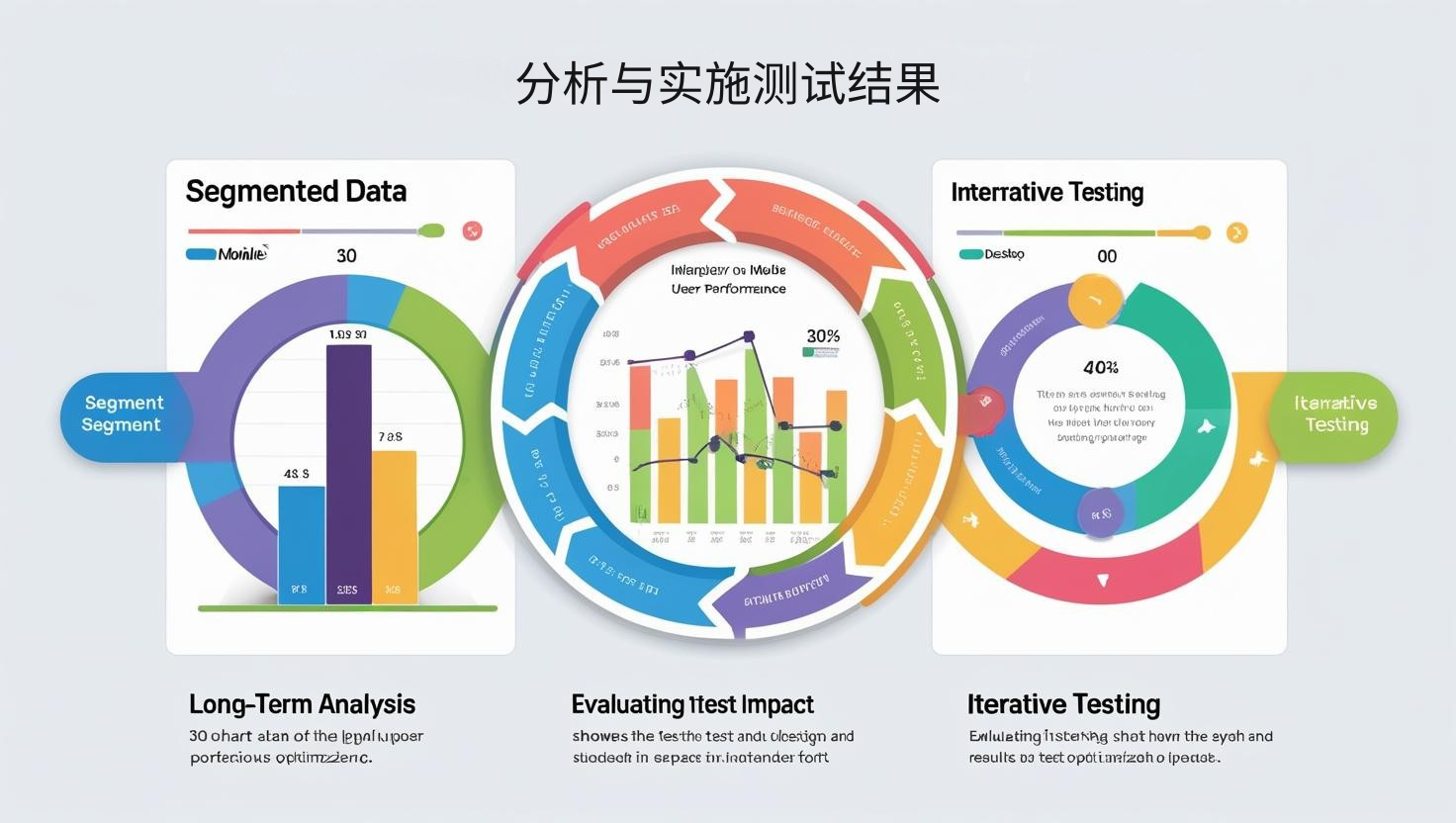
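
One common way to keep traffic allocation random yet stable is to hash a visitor identifier. The sketch below assumes each visitor has a persistent `user_id` (for example from a cookie); `assign_variant` and the experiment name are hypothetical, not part of any particular testing tool.

```python
import hashlib


def assign_variant(user_id, experiment, variants=("control", "treatment")):
    """Deterministically map a visitor to a variant.

    Hashing the experiment name together with the user id means the same
    visitor always sees the same variant, while the split does not depend
    on visit time or device type.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Use the first 32 bits of the hash as a bucket number.
    bucket = int(digest[:8], 16) % len(variants)
    return variants[bucket]


if __name__ == "__main__":
    # Hypothetical experiment name and visitor ids.
    counts = {"control": 0, "treatment": 0}
    for i in range(10000):
        counts[assign_variant(f"user-{i}", "button-color")] += 1
    print(counts)
```

Including the experiment name in the hash keeps assignments independent across experiments, so a visitor in the control group of one test is not automatically in the control group of the next.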

Analysis and implementation of test results

Analysis after the test is completed is equally important:

- Segmented data analysis: Look beyond the overall results and analyze how different user groups performed. For example, a variant may work well for mobile users but poorly for desktop users.
- Long-term impact assessment: Some changes produce short-term improvements but long-term declines. ConversionXL's research recommends tracking important changes for at least 30 days.
- Iterative testing: Feed the results of successful tests into the next round of tests to form a continuous optimization cycle.
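
Segmented analysis can be illustrated with a two-proportion z-test run once per segment. This is a sketch under stated assumptions: the conversion counts are invented, the function name is hypothetical, and the pooled z-test is one standard choice rather than the only valid method.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (absolute lift of B over A, two-sided p-value).

    Uses the pooled normal approximation, so it assumes reasonably large
    samples and at least some conversions in the data.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_b - p_a, p_value


# Hypothetical results: (control conversions, control visitors,
#                        variant conversions, variant visitors)
segments = {
    "mobile": (300, 10000, 380, 10000),
    "desktop": (250, 5000, 245, 5000),
}

if __name__ == "__main__":
    for name, (ca, na, cb, nb) in segments.items():
        lift, p = two_proportion_z_test(ca, na, cb, nb)
        verdict = "significant" if p < 0.05 else "not significant"
        print(f"{name}: lift {lift:+.2%}, p = {p:.3f} ({verdict})")
```

In this made-up data the variant helps mobile users but shows no reliable difference on desktop. Keep in mind that the more segments you slice, the more likely one of them looks significant by chance, so treat segment findings as hypotheses for the next round of tests.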

Conclusion

A/B testing is not a one-time activity but a core strategy for continuously optimizing an independent store. Through a systematic testing process, you can keep improving both user experience and conversion rate. Remember that even seemingly minor improvements can add up to significant cumulative gains over the long run. Start testing, let the data speak, and make your independent store stand out from the competition.